Multi-agent systems don’t fail in demos; they fail in real-world conditions. In one system we built, agents got stuck in loops. In another, they overwrote each other’s work. The problem isn’t the model. It’s the system design.

Most teams treat multi-agent systems as a scaling shortcut. They split one agent into many and expect better results. In practice, this creates coordination issues: duplicate work, conflicting actions, and loops.

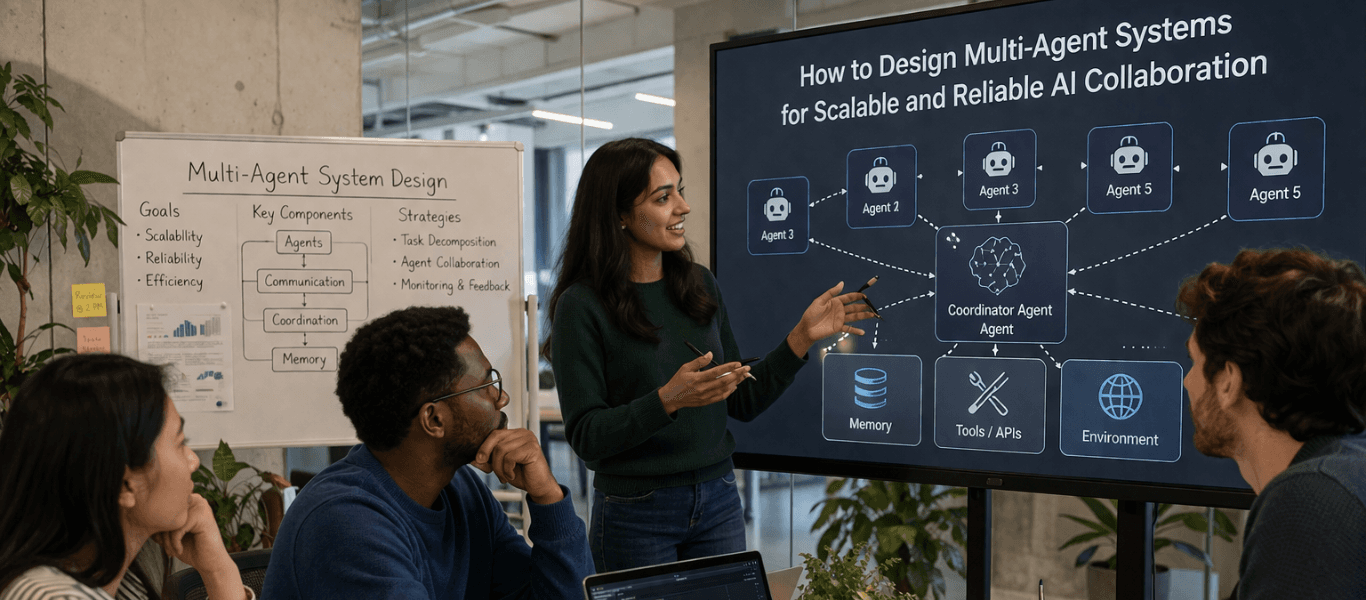

A production-grade multi-agent AI system needs structure: clear roles, controlled interactions, and defined workflows. This is where multi-agent system architecture matters. Without it, even strong multi-agent LLM setups become unreliable as complexity grows.

According to a recent Gartner prediction, by 2030 performing inference on an LLM with 1 trillion parameters will cost GenAI providers over 90% less than in 2025.

This guide covers how to approach multi agent system design properly in terms of architecture, key multi agent system design patterns, and the rules that keep AI multi agent systems scalable and stable.

Do You Actually Need Multiple Agents?

A multi agent AI system is not the default upgrade from a single agent. In many cases, a well-designed single agent with the right tools works better.

Start with the simplest system that works. Add AI agents only when you need:

- Clear specialization (domain or function)

- Context separation

- Parallel execution

- Permission/control boundaries

- Maker-checker validation loops

These are the real drivers behind effective multi agent systems.

A common mistake in multi agent AI system design is splitting agents without clear roles. This increases coordination overhead without improving output. Use a multi agent system in AI when it reduces complexity, not when it adds it.

The takeaway: If one agent can handle the workflow cleanly, don’t introduce more.

Architecture Rule: One Agent, One Clear Responsibility

Every agent in a multi agent AI system should do one thing well. Define for each agent:

- A single job

- Bounded tools

- A clear input/output contract

Avoid overlapping roles. That’s where most multi agent systems break: duplicate work, conflicting decisions, and unclear routing.

Good boundaries in multi agent system design are based on:

- Domain (research, pricing, compliance)

- Permission (read vs write)

- Output type (analysis vs execution)

This is the foundation of a stable multi-agent AI system architecture.

A simple spec per agent helps:

- Purpose

- Tools allowed

- Context scope

- Output schema

- Fallback behavior
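
The spec items above can be captured as a small data structure. The sketch below is one possible shape, not a prescribed format: `AgentSpec`, its field names, the example agents, and the `overlapping_tools` helper are all hypothetical names chosen for illustration. A shared tool is only a hint of overlapping roles, so treat the result as a prompt for review rather than an error.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical per-agent spec; fields mirror the list above."""
    name: str
    purpose: str
    tools_allowed: frozenset[str]
    context_scope: frozenset[str]        # keys of shared state the agent may read
    output_schema: dict[str, type]       # required output fields and their types
    fallback: str = "escalate_to_human"  # assumed default when the agent cannot finish


def overlapping_tools(specs: list[AgentSpec]) -> dict[str, list[str]]:
    """Return tools granted to more than one agent, with the agents that hold them."""
    owners: dict[str, list[str]] = {}
    for spec in specs:
        for tool in spec.tools_allowed:
            owners.setdefault(tool, []).append(spec.name)
    return {tool: sorted(names) for tool, names in owners.items() if len(names) > 1}


# Illustrative agents; tool names are made up for this example.
research = AgentSpec(
    name="research",
    purpose="Collect and summarize sources",
    tools_allowed=frozenset({"web_search", "read_document"}),
    context_scope=frozenset({"task", "sources"}),
    output_schema={"summary": str, "citations": list},
)
pricing = AgentSpec(
    name="pricing",
    purpose="Compute a price quote",
    tools_allowed=frozenset({"price_lookup", "read_document"}),
    context_scope=frozenset({"task", "catalog"}),
    output_schema={"quote": float},
)

print(overlapping_tools([research, pricing]))
```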

Put simply, if two agents can do the same job, the system will degrade over time.

Core Multi-Agent Design Patterns

This guide covers four core multi-agent design patterns. Choose patterns based on failure modes, not preference.

1. Supervisor (Manager) Pattern

One agent controls the workflow and delegates tasks to specialist agents. This works well when you need control, consistency, and auditability in a multi agent AI system.

Example: A customer support system where a supervisor agent routes queries to billing, technical, or account agents.

The tradeoff is bottlenecks. If the supervisor handles too much, the system slows down.
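
A minimal sketch of the supervisor pattern, using the customer support example. The specialist functions and the keyword-based `classify` function are stand-ins for real model calls and are assumptions made for illustration. The point is that every routing decision passes through one place, which makes it easy to log and audit.

```python
from typing import Callable

Agent = Callable[[str], str]


def billing_agent(query: str) -> str:
    return f"[billing] handled: {query}"


def technical_agent(query: str) -> str:
    return f"[technical] handled: {query}"


def account_agent(query: str) -> str:
    return f"[account] handled: {query}"


SPECIALISTS: dict[str, Agent] = {
    "billing": billing_agent,
    "technical": technical_agent,
    "account": account_agent,
}


def classify(query: str) -> str:
    """Stand-in for an LLM routing call; keyword matching is assumed for illustration."""
    text = query.lower()
    if "invoice" in text or "refund" in text:
        return "billing"
    if "error" in text or "crash" in text:
        return "technical"
    return "account"


def supervisor(query: str, audit_log: list[tuple[str, str]]) -> str:
    """Route the query to exactly one specialist and record the decision."""
    route = classify(query)
    audit_log.append((query, route))
    return SPECIALISTS[route](query)


log: list[tuple[str, str]] = []
print(supervisor("I need a refund for last month", log))
print(log)
```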

2. Peer Handoff Pattern

Agents pass control directly to one another based on the task. This fits dynamic workflows in AI multi agent systems where routing changes as the work progresses.

Example: A customer support system where the billing agent hands a query directly to the technical agent once it turns out to be a technical issue, with no supervisor in between.

The tradeoff is visibility. Handoffs can become hard to trace and easier to loop.
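
One way to keep peer handoffs traceable and bounded is to record the trail and cap the number of hops. The sketch below assumes each agent returns its result plus the name of the next agent (or `None` when done); the agent functions, `run_handoffs`, and the `max_hops` default are hypothetical.

```python
from typing import Callable, Optional

# An agent returns (result, next_agent); next_agent=None means the work is done.
Handler = Callable[[str], tuple[str, Optional[str]]]


def billing(task: str) -> tuple[str, Optional[str]]:
    if "error" in task:
        return task, "technical"  # not a billing problem, hand it off
    return f"billing resolved: {task}", None


def technical(task: str) -> tuple[str, Optional[str]]:
    return f"technical resolved: {task}", None


AGENTS: dict[str, Handler] = {"billing": billing, "technical": technical}


def run_handoffs(task: str, start: str, max_hops: int = 5) -> tuple[str, list[str]]:
    """Follow handoffs until an agent finishes, failing loudly if the hop limit is hit."""
    trail: list[str] = []
    current: Optional[str] = start
    result = task
    while current is not None:
        if len(trail) >= max_hops:
            raise RuntimeError(f"handoff limit reached: {' -> '.join(trail)}")
        trail.append(current)
        result, current = AGENTS[current](result)
    return result, trail


print(run_handoffs("payment error on invoice", "billing"))
```

The returned trail doubles as a minimal trace of who touched the task and in what order.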

3. Orchestrator-Worker Pattern

One agent breaks the task into parts, workers run them, and the system combines the results. This is a strong fit for research, analysis, and parallel-heavy multi agent AI system workflow design.

Example: A research system where multiple agents analyze different sources in parallel, then a final agent synthesizes the output.

This pattern improves throughput when subtasks are independent.
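
A small sketch of the orchestrator-worker pattern using Python's standard `concurrent.futures` module. `analyze_source` and `synthesize` are placeholder functions standing in for agent calls, and the thread pool is an assumption that fits I/O-bound work such as model or API requests.

```python
from concurrent.futures import ThreadPoolExecutor


def analyze_source(source: str) -> str:
    """Worker: stand-in for an agent call that analyzes one source."""
    return f"{source}: {len(source.split())} words"


def synthesize(findings: list[str]) -> str:
    """Final step: combine worker outputs into one result."""
    return " | ".join(findings)


def orchestrate(sources: list[str], max_workers: int = 4) -> str:
    """Fan independent subtasks out to workers, then combine the results."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Executor.map returns results in input order, regardless of finish order.
        findings = list(pool.map(analyze_source, sources))
    return synthesize(findings)


print(orchestrate(["alpha beta", "gamma"]))
```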

4. Maker-Checker Pattern

One agent produces the output. Another reviews or validates it. This is one of the most practical multi agent system design patterns for reliability.

Example: A code generation system where one agent writes code and another reviews for correctness and security.

The tradeoff is cost. More checks improve quality, but they also add latency and compute.
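
The maker-checker loop can be sketched as a bounded revision cycle. The toy maker and checker below are illustrative stand-ins for model calls, and `max_rounds` is an assumed cap that keeps the added latency and compute predictable.

```python
from typing import Callable

Maker = Callable[[str, str], str]           # (task, feedback) -> draft
Checker = Callable[[str], tuple[bool, str]]  # draft -> (approved, feedback)


def maker_checker(task: str, make: Maker, check: Checker, max_rounds: int = 3) -> tuple[str, int]:
    """Ask the maker for drafts until the checker approves or the round limit is hit."""
    feedback = ""
    for round_no in range(1, max_rounds + 1):
        draft = make(task, feedback)
        approved, feedback = check(draft)
        if approved:
            return draft, round_no
    raise RuntimeError(f"no approved draft after {max_rounds} rounds: {feedback}")


def toy_maker(task: str, feedback: str) -> str:
    """Stand-in for a code-writing agent; it changes approach once it gets feedback."""
    if "eval" in feedback:
        return "def parse(x):\n    return int(x)"
    return "def parse(x):\n    return eval(x)"


def toy_checker(draft: str) -> tuple[bool, str]:
    """Stand-in for a review agent that rejects one unsafe construct."""
    if "eval(" in draft:
        return False, "do not use eval on untrusted input"
    return True, ""


draft, rounds = maker_checker("write a parser", toy_maker, toy_checker)
print(rounds)
print(draft)
```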

Choosing the Right Pattern

To choose the right pattern, you need to consider task predictability, need for parallelism, level of control required, and the cost of failure.

- Use a Supervisor pattern when control matters more than speed. It works well when you need strict routing, centralized policy enforcement, and predictable behavior in a multi agent AI system architecture.

- Use Peer Handoffs when routing is fluid and domains are clearly separated. This fits systems where agents need to pass work naturally without a central controller.

- Use Orchestrator-Worker when the task can be broken into parallel subtasks. This is one of the most effective multi agent AI system architecture patterns for research, analysis, and batch-heavy workflows.

- Use Maker-Checker when output quality matters more than first-pass speed. It’s useful when you need validation before the system returns or executes anything.

A good multi agent system design starts with one question: where is this system most likely to fail?

The takeaway: Pattern choice is a failure-mode decision, not a style choice.

Context Design: Prevent Agents from Stepping on Each Other

Most multi agent systems fail when agents see too much context or too little. Passing full transcripts across the system usually adds noise, cost, and confusion.

A good multi agent AI system design separates:

- Shared state

- Local state

- Retrieved context

Each agent should get only the context it needs to do its job. Instead of sharing raw transcripts, pass a structured payload between agents:

- Task → what needs to be done

- Constraints → rules or limits to follow

- Relevant data → only the inputs required for this step

- Output format → how the result should be structured

This keeps the multi agent AI system workflow cleaner and reduces routing errors. Context should be scoped by responsibility, not copied by default.
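
As a rough illustration, the four payload fields can be modeled as a small immutable object built from shared state. `HandoffPayload`, `build_payload`, and the example keys are hypothetical names; the idea is that the sender selects the data for this step explicitly instead of forwarding everything it has.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class HandoffPayload:
    """Hypothetical payload passed between agents; fields mirror the list above."""
    task: str
    constraints: tuple[str, ...]
    relevant_data: dict[str, Any]
    output_format: dict[str, str]


def build_payload(
    task: str,
    constraints: list[str],
    shared_state: dict[str, Any],
    needed_keys: list[str],
    output_format: dict[str, str],
) -> HandoffPayload:
    """Copy only the requested keys out of shared state; fail if one is missing."""
    missing = [key for key in needed_keys if key not in shared_state]
    if missing:
        raise KeyError(f"shared state is missing: {missing}")
    return HandoffPayload(
        task=task,
        constraints=tuple(constraints),
        relevant_data={key: shared_state[key] for key in needed_keys},
        output_format=output_format,
    )


state = {"order_id": 1042, "customer_tier": "gold", "transcript": "...long chat history..."}
payload = build_payload(
    task="Check refund eligibility",
    constraints=["read-only", "no customer contact"],
    shared_state=state,
    needed_keys=["order_id", "customer_tier"],
    output_format={"eligible": "bool", "reason": "str"},
)
print(payload.relevant_data)
```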

Tooling Design: Keep Agents Focused

Tools define what your multi agent AI system can actually do. Poor tool design creates confusion, retries, and unpredictable behavior.

Each agent should have access to only the tools it needs. This keeps decisions cleaner and reduces errors across multi agent systems.

Design tools as contracts:

- Clear purpose

- Strict input/output schema

- Predictable responses

Avoid giving all agents access to all tools. That breaks control in a multi agent AI system architecture and leads to misuse. This is especially important in complex multi agent AI system workflow setups, where multiple agents interact with the same systems.

In short: fewer, well-defined tools lead to better decisions than broad access.
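
A sketch of tools as contracts with per-agent access. `ToolRegistry` is a hypothetical helper written for this example, not part of any framework: each tool is registered with an input schema, each agent is granted specific tools, and every call is checked against both before it runs.

```python
from typing import Any, Callable


class ToolRegistry:
    """Hypothetical registry that enforces tool grants and input schemas."""

    def __init__(self) -> None:
        self._tools: dict[str, tuple[Callable[..., Any], dict[str, type]]] = {}
        self._grants: dict[str, set[str]] = {}

    def register(self, name: str, func: Callable[..., Any], input_schema: dict[str, type]) -> None:
        self._tools[name] = (func, input_schema)

    def grant(self, agent: str, tool: str) -> None:
        if tool not in self._tools:
            raise KeyError(f"unknown tool: {tool}")
        self._grants.setdefault(agent, set()).add(tool)

    def call(self, agent: str, tool: str, **kwargs: Any) -> Any:
        # Access check first: an agent can only call tools it was granted.
        if tool not in self._grants.get(agent, set()):
            raise PermissionError(f"{agent} may not call {tool}")
        func, schema = self._tools[tool]
        # Strict input contract: exact argument names and expected types.
        if set(kwargs) != set(schema):
            raise ValueError(f"{tool} expects arguments {sorted(schema)}")
        for key, expected in schema.items():
            if not isinstance(kwargs[key], expected):
                raise TypeError(f"{tool}.{key} must be {expected.__name__}")
        return func(**kwargs)


def lookup_order(order_id: int) -> dict[str, Any]:
    """Illustrative tool with a predictable response shape."""
    return {"order_id": order_id, "status": "shipped"}


registry = ToolRegistry()
registry.register("lookup_order", lookup_order, {"order_id": int})
registry.grant("billing", "lookup_order")
print(registry.call("billing", "lookup_order", order_id=7))
```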

Reliability: Control Failure Before it Scales

Reliability in a multi agent system design comes from explicit limits, not prompts. A multi agent AI system does not fail in one place. It fails across routing, handoffs, context, and tool use.

Official guidance from both LangChain and Azure stresses that multi-agent setups add coordination overhead, and that handoffs and shared context need deliberate control.

Common failure modes in multi agent systems include:

- Duplicate work

- Infinite handoffs

- Context drift

- Tool misuse

- Silent sub-agent failure

These issues usually come from weak boundaries, bloated context, or unclear routing. The guardrails are simple. Set:

- Iteration limits

- Timeouts

- Schema validation

- Role-based tool access

- Deterministic checkpoints

These controls matter even more in complex multi agent AI system workflow setups, where one bad step can cascade across the system. Azure’s current guidance also warns against unnecessary complexity and recommends using the lowest complexity level that reliably meets the requirement.
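
Three of these guardrails (iteration limits, timeouts, and schema validation) can be sketched in a few lines. The names `run_with_guardrails`, `validate_schema`, and `GuardrailError`, as well as the default limits, are assumptions for illustration. The deadline here is checked between steps, so it does not interrupt a step that is already running.

```python
import time
from typing import Any, Callable


class GuardrailError(RuntimeError):
    """Raised when a limit or schema check fails."""


def validate_schema(output: dict[str, Any], schema: dict[str, type]) -> None:
    """Check that every required field is present and has the expected type."""
    for key, expected in schema.items():
        if key not in output:
            raise GuardrailError(f"missing field: {key}")
        if not isinstance(output[key], expected):
            raise GuardrailError(f"field {key} must be {expected.__name__}")


def run_with_guardrails(
    step: Callable[[int], dict[str, Any]],
    schema: dict[str, type],
    max_iterations: int = 5,
    timeout_s: float = 30.0,
) -> dict[str, Any]:
    """Call step(i) until it reports done, enforcing limits on every pass."""
    deadline = time.monotonic() + timeout_s
    for i in range(max_iterations):
        if time.monotonic() > deadline:
            raise GuardrailError("timeout exceeded")
        output = step(i)
        validate_schema(output, schema)
        if output.get("done", False):
            return output
    raise GuardrailError(f"iteration limit reached ({max_iterations})")


def toy_step(i: int) -> dict[str, Any]:
    """Stand-in for an agent step that finishes on its third pass."""
    return {"done": i >= 2, "answer": f"draft {i}"}


print(run_with_guardrails(toy_step, {"done": bool, "answer": str}))
```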

The takeaway: Multi-agent systems fail across boundaries, not inside a single agent.

Observability: You Can’t Debug What You Can’t See

You cannot debug a multi agent AI system by reading the final answer. Once multiple agents, tools, and handoffs are involved, you need traces across the full workflow. At minimum, log:

- Routing decisions

- Handoffs

- Tool calls

- Intermediate outputs

- Latency and cost per step

This is how you improve multi-agent AI system performance over time. It also helps you catch loops, bad routing, and noisy context before they spread through the system. Give each agent a distinct identity, and emit telemetry that lets you separate agent behavior inside larger applications.
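
A minimal trace recorder might look like the sketch below. `Tracer`, `TraceEvent`, and the event kinds are hypothetical names; a production system would more likely send these events to an existing tracing backend than keep them in memory.

```python
import json
from dataclasses import asdict, dataclass
from typing import Any


@dataclass
class TraceEvent:
    trace_id: str
    agent: str             # distinct identity of the agent that acted
    kind: str              # e.g. "routing", "handoff", "tool_call", "output"
    detail: dict[str, Any]
    latency_ms: float
    cost_usd: float


class Tracer:
    """Hypothetical in-memory recorder for one workflow run."""

    def __init__(self, trace_id: str) -> None:
        self.trace_id = trace_id
        self.events: list[TraceEvent] = []

    def record(self, agent: str, kind: str, detail: dict[str, Any],
               latency_ms: float = 0.0, cost_usd: float = 0.0) -> TraceEvent:
        event = TraceEvent(self.trace_id, agent, kind, detail, latency_ms, cost_usd)
        self.events.append(event)
        return event

    def total_cost(self) -> float:
        return sum(event.cost_usd for event in self.events)

    def to_jsonl(self) -> str:
        """One JSON object per line, ready to append to a log file."""
        return "\n".join(json.dumps(asdict(event), sort_keys=True) for event in self.events)


tracer = Tracer("run-001")
tracer.record("router", "routing", {"chosen": "billing"}, latency_ms=12.0)
tracer.record("billing", "tool_call", {"tool": "lookup_order"}, latency_ms=85.0, cost_usd=0.002)
tracer.record("billing", "output", {"chars": 240}, latency_ms=640.0, cost_usd=0.004)
print(tracer.to_jsonl())
```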

For multi-agent AI system development, observability is not a nice-to-have. It is the only way to see what actually happened.

Final outputs alone tell you little about where a workflow went wrong. If you can’t trace the workflow, you can’t operate the system confidently.

Evaluation: Test the System, Not Just the Agents

A multi agent AI system should be evaluated at two levels: agent-level and workflow-level. At the agent level, test whether each agent is doing its job correctly:

- Choosing the right tool

- Following the expected schema

- Producing the right type of output

At the workflow level, test whether the full system behaves correctly:

- The right agent gets selected

- Handoffs happen when they should

- The final output is accurate and complete

This is where many multi-agent systems fail. Individual agents may work well on their own, but the full workflow still breaks because coordination is weak.

For strong multi agent AI system design, track:

- Task success

- Output quality

- Latency

- Cost

- Loop rate

Ultimately, you need to evaluate the full workflow, not just the parts inside it.
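
The workflow-level metrics listed above can be aggregated from per-run records. This is a sketch under assumptions: `RunResult` and its fields are hypothetical, `quality` is taken to be a 0 to 1 score from whatever grader you use, and `looped` marks runs that hit an iteration or handoff limit.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RunResult:
    succeeded: bool
    quality: float    # assumed 0.0 to 1.0 score from a grader
    latency_s: float
    cost_usd: float
    looped: bool      # run hit an iteration or handoff limit


def summarize(runs: list[RunResult]) -> dict[str, float]:
    """Aggregate workflow-level metrics over a batch of evaluation runs."""
    if not runs:
        raise ValueError("no runs to summarize")
    total = len(runs)
    return {
        "task_success": sum(run.succeeded for run in runs) / total,
        "avg_quality": mean(run.quality for run in runs),
        "avg_latency_s": mean(run.latency_s for run in runs),
        "avg_cost_usd": mean(run.cost_usd for run in runs),
        "loop_rate": sum(run.looped for run in runs) / total,
    }


runs = [
    RunResult(True, 0.9, 1.0, 0.01, False),
    RunResult(True, 0.8, 2.0, 0.02, False),
    RunResult(False, 0.2, 3.0, 0.05, True),
    RunResult(True, 0.7, 2.0, 0.02, False),
]
print(summarize(runs))
```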

Reference Architecture (Production-Ready)

A production multi agent AI system architecture is not a loose collection of agents. It’s a structured workflow with clear roles and controlled interactions that looks like this:

User → Router → Orchestrator → Specialists → Synthesizer → Checker → Response

Each step has a defined purpose:

- Router: Directs the request to the right workflow or agent

- Orchestrator: Breaks the task into steps and manages execution

- Specialist Agents: Handle domain-specific work (retrieval, analysis, execution)

- Synthesizer: Combines outputs into a coherent result

- Checker: Validates the output before it is returned

This structure reflects common multi agent AI system architecture patterns used in production.
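
To make the flow concrete, here is a toy end-to-end sketch of the stages above. Every function is a placeholder for an agent or model call, and the routing rule (splitting on " vs ") is an assumption chosen only so the example has something to decide.

```python
def router(request: str) -> str:
    """Pick a workflow; the rule is a placeholder for a real routing decision."""
    return "compare" if " vs " in request else "single"


def orchestrator(workflow: str, request: str) -> list[str]:
    """Break the request into subtasks for the chosen workflow."""
    if workflow == "compare":
        return [part.strip() for part in request.split(" vs ")]
    return [request.strip()]


def specialist(subtask: str) -> str:
    """Stand-in for a domain agent handling one subtask."""
    return f"notes on {subtask}"


def synthesizer(findings: list[str]) -> str:
    return "; ".join(findings)


def checker(answer: str) -> bool:
    """Minimal validation: reject empty answers."""
    return bool(answer.strip())


def handle(request: str) -> tuple[str, list[str]]:
    """Run one request through every stage, recording a trace entry per step."""
    trace: list[str] = []
    workflow = router(request)
    trace.append(f"router:{workflow}")
    subtasks = orchestrator(workflow, request)
    trace.append(f"orchestrator:{len(subtasks)} subtasks")
    findings = [specialist(subtask) for subtask in subtasks]
    trace.append("specialists:done")
    answer = synthesizer(findings)
    trace.append("synthesizer:done")
    if not checker(answer):
        raise ValueError("checker rejected the answer")
    trace.append("checker:passed")
    return answer, trace


print(handle("Postgres vs MySQL"))
```

The specialists run sequentially here to keep the sketch short; independent subtasks are where concurrent execution would apply.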

Here are the key design principles:

- Clear Role Separation: Each agent has a defined responsibility

- Controlled Handoffs: Transitions between agents are explicit, not implicit

- Parallel Where Possible: Independent tasks run concurrently to improve throughput

- Validation Before Output: The system checks results before returning them

- Traceability Across Steps: Every action is observable and debuggable

This is what a stable multi agent AI system workflow looks like in practice. It’s not about adding more agents but about structuring how they collaborate.

A good architecture reduces coordination overhead instead of increasing it.

How SoluteLabs Approaches Building Multi-Agent Systems

At SoluteLabs, we don’t treat a multi agent AI system as a collection of agents. We treat it as a controlled workflow built using our Gen 3 methodology.

The structure is simple and consistent:

- Context: Define what the system needs to know. Scope the data, inputs, and constraints clearly.

- Planning: Break the task into steps. Decide which agents are involved and how work flows between them.

- Execution: Agents perform their tasks using defined tools, roles, and boundaries within the multi agent AI system architecture.

- Verification: Validate outputs before they move forward or reach the user. This includes checks, review loops, and fallback logic.

This approach keeps multi agent systems predictable. Each stage has a clear purpose, and failures are contained instead of spreading across the workflow. This way, your multi-agent system operates as a structured pipeline, not a loosely connected set of agents.

Best Practices for Scalable Multi-Agent Systems

Scale comes from discipline in the design, not from the number of agents. To drive that discipline, you need to keep:

- Agents Narrow: A multi agent AI system scales better when each agent has one clear job and a limited scope.

- Prompts Spec-driven: Good multi agent system design depends on clear instructions, structured outputs, and defined responsibilities.

- Handoffs Structured: Pass only the task, constraints, relevant context, and expected output. This keeps the multi agent AI system workflow clean and predictable.

- Tool Access Minimal: Agents should only use the tools they actually need. This improves control across AI multi agent systems.

- State Ownership Clear: Shared state should be intentional, not accidental. Each agent should know what it owns and what it only reads.

- Humans in the Loop Where Needed: For high-impact tasks, review and validation matter more than full autonomy.

- Evals and Observability Close to Shipping: Don’t treat them as cleanup work after the system is built.

- Architecture as Simple as Possible: A good multi agent AI system architecture grows by solving real problems, not by adding unnecessary layers.

Wrapping Up

A multi agent AI system scales only when responsibility is clearly defined.

The real work in multi agent system design is not adding more agents. It is setting clean boundaries, choosing the right coordination pattern, controlling context, and making the workflow observable.

Start simple. Add agents only where they reduce complexity, not where they multiply it.

If you need help deploying multi-agent AI systems or evaluating your current setup, reach out to us. We build production-grade multi-agent AI systems that actually hold up in real workflows.